How to Preserve the Source IP of Requests After Load Balancing in a K8s Cluster


Introduction

Deploying an application is not always just a matter of installing and running it; sometimes networking has to be considered as well. This article explains how to let services in a K8s cluster obtain the source IP of requests.

Services generally depend on their input. If that input does not depend on the five-tuple (source IP, source port, destination IP, destination port, protocol), the service is only loosely coupled to the network and does not need to care about network details.

Therefore, most people have no need to read this article. If you are interested in networking or want to broaden your horizons, you can continue reading to learn about more service scenarios.

This article is based on K8s v1.29.4. Some passages use "pod" and "endpoint" interchangeably; in this context they can be treated as equivalent.

If there are any errors, please point them out, and I will correct them promptly.

Why is Source IP Information Lost?

First, let's clarify what the source IP is. When A sends a request to B and B forwards it to C, C sees B's IP as the source address in the IP header, but this article treats A's IP as the source IP.

There are two main kinds of behavior that cause source information to be lost:

- Network Address Translation (NAT), used to conserve public IPv4 addresses, to implement load balancing, and so on. The server sees the IP of the NAT device as the source IP instead of the real source IP.

- Proxies. Forward proxies, reverse proxies (RP), and load balancers (LB) all fall into this category and are collectively called proxy servers below. They forward requests to backend services but replace the source IP with their own IP.

NAT trades port space for IP space. IPv4 addresses are scarce, while one IP address has 65,535 usable ports per protocol. Most of these ports usually go unused, so multiple private IPs can share one public IP and be told apart by port. A NAT mapping looks like:

public IP:public port -> private IP_1:private port. For more details, please refer to Network Address Translation.

Proxy servers are used to hide or expose services. They forward requests to backend services with their own IP as the source IP; a reverse proxy, for example, hides the real IPs of the backend services to protect them. The path through a proxy looks like:

client IP -> proxy IP -> server IP. For more details, please refer to Proxy.
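To make the NAT mapping concrete, here is a toy source-NAT table in Python. It is a simplified model for illustration only, not how any real NAT device or the Linux conntrack table works (those key on the full connection tuple and expire entries): outbound packets get their source rewritten to a public IP and a newly allocated port, and replies are mapped back through that port. All addresses are made-up documentation values.

```python
from dataclasses import dataclass
from itertools import count


@dataclass(frozen=True)
class Packet:
    src: tuple[str, int]  # (ip, port)
    dst: tuple[str, int]


class SourceNat:
    """Toy SNAT table: many private (ip, port) pairs share one public IP."""

    def __init__(self, public_ip: str, first_port: int = 40000) -> None:
        self.public_ip = public_ip
        self._ports = count(first_port)  # naive allocator, never reuses ports
        self._out: dict[tuple[str, int], int] = {}  # private endpoint -> public port
        self._back: dict[int, tuple[str, int]] = {}  # public port -> private endpoint

    def outbound(self, pkt: Packet) -> Packet:
        port = self._out.get(pkt.src)
        if port is None:  # first packet from this private endpoint: allocate a port
            port = next(self._ports)
            self._out[pkt.src] = port
            self._back[port] = pkt.src
        # The server only ever sees public_ip:port as the source.
        return Packet(src=(self.public_ip, port), dst=pkt.dst)

    def inbound(self, pkt: Packet) -> Packet:
        # A reply addressed to public_ip:port is mapped back to the private endpoint.
        return Packet(src=pkt.src, dst=self._back[pkt.dst[1]])


nat = SourceNat("203.0.113.7")
a = nat.outbound(Packet(("192.168.1.10", 51000), ("198.51.100.20", 80)))
b = nat.outbound(Packet(("192.168.1.11", 51000), ("198.51.100.20", 80)))
print(a.src, b.src)  # ('203.0.113.7', 40000) ('203.0.113.7', 40001)
reply = nat.inbound(Packet(("198.51.100.20", 80), a.src))
print(reply.dst)  # ('192.168.1.10', 51000)
```

Both private hosts appear to the server as 203.0.113.7, distinguished only by port, which is exactly why the server cannot recover the real source IP on its own.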

NAT and proxy servers are very common, so by default most services cannot obtain the real source IP of requests.

These are the two common ways to modify the source IP. Supplements for others are welcome.

How to Preserve the Source IP?

Here is the layout of an HTTP request carried over TCP/IPv4 (bit offsets are relative to the start of each header):

| Field | Length (bits) | Bit Offset | Description |
|---|---|---|---|
| **IP Header (IPv4)** | | | |
| Source IP | 32 | 96-127 | Sender's IP address |
| Destination IP | 32 | 128-159 | Receiver's IP address |
| **TCP Header** | | | |
| Source Port | 16 | 0-15 | Sending port number |
| Destination Port | 16 | 16-31 | Receiving port number |
| Sequence Number | 32 | 32-63 | Identifies the byte stream sent by the sender |
| Acknowledgment Number | 32 | 64-95 | If the ACK flag is set, the next sequence number the sender expects to receive |
| Data Offset | 4 | 96-99 | TCP header length in 32-bit words, i.e. where the data starts |
| Reserved | 4 | 100-103 | Reserved field, unused, set to 0 |
| Flags | 8 | 104-111 | Control flags such as SYN, ACK, FIN |
| Window Size | 16 | 112-127 | Amount of data the receiver can accept |
| Checksum | 16 | 128-143 | Detects errors that occurred during transmission |
| Urgent Pointer | 16 | 144-159 | Position of urgent data that the sender wants the receiver to process first |
| Options | Variable | 160-… | May include timestamps, maximum segment size, etc. |
| **HTTP Header** | | | |
| Request Line | Variable | … | Request method, URI, and HTTP version |
| Header Fields | Variable | … | Header fields such as Host, User-Agent, etc. |
| Empty Line | 16 (CRLF) | … | Separates the header and body sections |
| Body | Variable | … | Optional request body |

In the structure above, the TCP options, request line, header fields, and body are variable-length. The TCP options field is small (at most 40 bytes) and is generally not used to carry the source IP. The request line has a fixed format and cannot be extended. The body cannot be modified once it is encrypted. That leaves the HTTP header fields as the practical place to carry the source IP.

You can add an X-Real-IP field to the HTTP header to carry the source IP. This is usually done on the proxy server: it adds the header and forwards the request, and the backend service then reads the source IP from this field.
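As a rough illustration of what the proxy does, the Python function below takes the headers of an incoming request plus the address of the TCP peer it came from, and returns the headers to forward upstream. It sets X-Real-IP and appends to X-Forwarded-For, the convention most reverse proxies follow. The function name and the choice to overwrite any client-supplied X-Real-IP are assumptions of this sketch, not the behavior of a specific product.

```python
def add_forwarding_headers(headers: dict[str, str], peer_ip: str) -> dict[str, str]:
    """Return a copy of `headers` carrying the client address for the backend.

    `peer_ip` is the source IP this proxy saw on the TCP connection. The proxy is
    assumed to be the first hop, so that address has not been rewritten yet.
    """
    forwarded = {"x-real-ip", "x-forwarded-for"}
    out = {k: v for k, v in headers.items() if k.lower() not in forwarded}
    # X-Real-IP holds a single address; overwrite it so a client cannot spoof it.
    out["X-Real-IP"] = peer_ip
    # X-Forwarded-For is a comma-separated list; each proxy appends the peer it saw.
    prior = next((v for k, v in headers.items() if k.lower() == "x-forwarded-for"), "")
    out["X-Forwarded-For"] = f"{prior}, {peer_ip}" if prior else peer_ip
    return out


fwd = add_forwarding_headers({"Host": "whoami.example.com", "X-Real-IP": "1.2.3.4"}, "198.51.100.23")
print(fwd)
# {'Host': 'whoami.example.com', 'X-Real-IP': '198.51.100.23', 'X-Forwarded-For': '198.51.100.23'}
print(add_forwarding_headers({"X-Forwarded-For": "192.0.2.1"}, "198.51.100.23")["X-Forwarded-For"])
# 192.0.2.1, 198.51.100.23
```

The spoofed X-Real-IP sent by the client is discarded, while an existing X-Forwarded-For list is extended rather than replaced, which is what allows multi-layer proxies to record the whole path.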

Note that the proxy server must sit in front of any NAT device, that is, it must receive the request before its source address is rewritten, or it cannot learn the real source IP. Alibaba Cloud, for example, offers Load Balancer as a separate product category, placed at a different position in the network from ordinary application servers.

K8s Operation Guide

We use the whoami project as the example deployment.
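whoami is a tiny HTTP server that echoes back details of the request it received, including RemoteAddr (the peer address of the TCP connection) and the request headers. If you want something similar to experiment with locally, the following is a minimal Python stand-in written for this article using http.server; it is not the traefik/whoami source, and its output format only loosely mimics it. The names render, WhoamiHandler, and serve are hypothetical.

```python
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def render(remote_addr: tuple[str, int], headers: list[tuple[str, str]]) -> str:
    """Format the fields this article cares about, one per line."""
    lines = [f"RemoteAddr: {remote_addr[0]}:{remote_addr[1]}"]
    lines += [f"{name}: {value}" for name, value in headers]
    return "\n".join(lines) + "\n"


class WhoamiHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        # client_address is the TCP peer: after SNAT or a proxy, it is NOT the real client.
        body = render(self.client_address, list(self.headers.items())).encode()
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8080) -> None:
    """Block forever serving on 0.0.0.0:port (8080 matches the manifests below)."""
    ThreadingHTTPServer(("0.0.0.0", port), WhoamiHandler).serve_forever()
```

Calling serve() and sending it a request through a proxy shows the same effect as in the K8s walkthrough: RemoteAddr is the last hop, and only a forwarded header can carry the original client IP.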

Create Deployment

First, create the Deployment:

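```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: whoami-deployment
spec:
  replicas: 3
  selector:
    matchLabels:
      app: whoami
  template:
    metadata:
      labels:
        app: whoami
    spec:
      containers:
        - name: whoami
          image: docker.io/traefik/whoami:latest
          # traefik/whoami listens on port 80 by default; make it listen on 8080
          # so it matches containerPort and the Service targetPort.
          args: ["--port", "8080"]
          ports:
            - containerPort: 8080
```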

This step creates a Deployment containing 3 Pods, each pod containing one container running the whoami service.

Create Service

You can create a NodePort or LoadBalancer Service for external access, or create a ClusterIP Service (reachable only inside the cluster) and expose it externally through an Ingress.

A NodePort Service can be reached directly at NodeIP:NodePort or through an Ingress, which makes it convenient for testing. This section uses a NodePort Service.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: whoami-service
spec:
  type: NodePort
  selector:
    app: whoami
  ports:
    - protocol: TCP
      port: 80
      targetPort: 8080
      nodePort: 30002
```

After creating the Service, access it with curl whoami.example.com:30002. The returned IP is a NodeIP, not the source IP of the request.

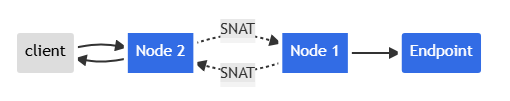

Note that this is not the real client IP but an internal IP of the cluster. Here is what happens:

- Client sends packet to node2:nodePort

- node2 replaces the packet’s source IP address with its own IP address (SNAT)

- node2 replaces the packet’s destination IP with Pod IP

- Packet is routed to node1, then to the endpoint

- Pod’s reply is routed back to node2

- Pod’s reply is sent back to the client

Illustrated with a diagram:

Configure externalTrafficPolicy: Local

To avoid this, Kubernetes offers a way to preserve the client source IP. If you set service.spec.externalTrafficPolicy to Local, kube-proxy only proxies requests to endpoints on the local node and does not forward traffic to other nodes. Because the packet never leaves the receiving node, it does not need to be SNATed, so the Pod sees the client's real IP.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: whoami-service
spec:
  type: NodePort
  externalTrafficPolicy: Local
  selector:
    app: whoami
  ports:
    - protocol: TCP
      port: 80
      targetPort: 8080
      nodePort: 30002
```

Test again with curl whoami.example.com:30002. If whoami.example.com resolves to the IPs of several nodes in the cluster, some requests may fail, so make sure the DNS records contain only the IPs of nodes where an endpoint (pod) is running.

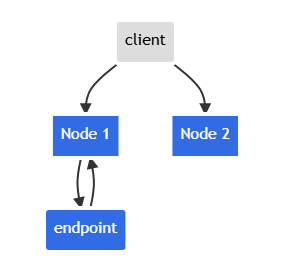

This configuration comes at a cost: it loses the cluster-wide load balancing capability. Clients will only get a response when accessing nodes where endpoints are deployed.

For example, if Node 2 runs no whoami endpoint, a client that connects to Node 2 gets no response.
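The trade-off between the two externalTrafficPolicy values can be summarized with a small Python model. It is a deliberate simplification of kube-proxy (no health-check node port, no conntrack, no real endpoint selection): Cluster may send the packet to an endpoint on any node and SNATs it, which this sketch models as the entry node's IP, while Local only uses endpoints on the receiving node and keeps the client IP. Node names and IPs are illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    ip: str
    pod_ips: list[str]  # whoami endpoints running on this node


@dataclass
class Delivery:
    pod_ip: str
    seen_source_ip: str  # the source IP the Pod sees


def deliver(policy: str, client_ip: str, entry: Node, nodes: list[Node]) -> Optional[Delivery]:
    """Where does a packet sent to entry.ip:nodePort end up, and with which source IP?"""
    if policy == "Local":
        if not entry.pod_ips:
            return None  # no local endpoint: traffic is dropped, the client gets no response
        # The packet stays on this node, so it is not SNATed: the client IP survives.
        return Delivery(entry.pod_ips[0], client_ip)
    if policy == "Cluster":
        endpoints = [ip for n in nodes for ip in n.pod_ips]
        if not endpoints:
            return None
        # Any endpoint in the cluster may be chosen (the first one here, for determinism),
        # and the packet is SNATed, modeled here as the entry node's IP.
        return Delivery(endpoints[0], entry.ip)
    raise ValueError(f"unknown externalTrafficPolicy: {policy}")


node1 = Node("node1", "172.16.0.11", ["10.42.1.1"])
node2 = Node("node2", "172.16.0.12", [])
nodes = [node1, node2]
print(deliver("Cluster", "198.51.100.23", node2, nodes))
# Delivery(pod_ip='10.42.1.1', seen_source_ip='172.16.0.12')
print(deliver("Local", "198.51.100.23", node1, nodes))
# Delivery(pod_ip='10.42.1.1', seen_source_ip='198.51.100.23')
print(deliver("Local", "198.51.100.23", node2, nodes))
# None
```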

Create Ingress

Most user-facing services use HTTP/HTTPS, and an address like https://ip:port looks unfamiliar to users. An Ingress is therefore typically used to expose the NodePort Service created above on port 80/443 under a domain name.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: whoami-ingress
  namespace: default
spec:
  ingressClassName: external-lb-default
  rules:
    - host: whoami.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: whoami-service
                port:
                  number: 80
```

After applying it, test with curl whoami.example.com. The client address that whoami reports (RemoteAddr) is always the Pod IP of the Ingress Controller on the node where the endpoint is located, not the real client IP.

```shell
root@client:~# curl whoami.example.com
...
RemoteAddr: 10.42.1.10:56482
...

root@worker:~# kubectl get -n ingress-nginx pod -o wide
NAME                                       READY   STATUS    RESTARTS   AGE    IP           NODE          NOMINATED NODE   READINESS GATES
ingress-nginx-controller-c8f499cfc-xdrg7   1/1     Running   0          3d2h   10.42.1.10   k3s-agent-1   <none>           <none>
```

Using an Ingress as a reverse proxy for the NodePort Service adds two layers (the Ingress Controller and the Service) in front of the endpoint. The diagrams below compare the two paths.

Path 1: through the Ingress

```mermaid
graph LR
    A[Client] -->|whoami.example.com:80| B(Ingress)
    B -->|10.43.38.129:32123| C[Service]
    C -->|10.42.1.1:8080| D[Endpoint]
```

Path 2: directly through the NodePort

```mermaid
graph LR
    A[Client] -->|whoami.example.com:30002| B(Service)
    B -->|10.42.1.1:8080| C[Endpoint]
```

In Path 1, external traffic to the Ingress first reaches an Ingress Controller endpoint and only then reaches the whoami endpoint.

The Ingress Controller is itself exposed through a LoadBalancer Service, and the Ingress resources are served at that load balancer's address:

```shell
kubectl get ingress -A
NAMESPACE   NAME             CLASS   HOSTS                ADDRESS                                              PORTS   AGE
default     echoip-ingress   nginx   ip.example.com       172.16.0.57,2408:4005:3de:8500:4da1:169e:dc47:1707   80      18h
default     whoami-ingress   nginx   whoami.example.com   172.16.0.57,2408:4005:3de:8500:4da1:169e:dc47:1707   80      16h
```

Therefore, you can preserve the source IP by setting externalTrafficPolicy: Local, as described above, on the Ingress Controller's Service.

In addition, set use-forwarded-headers to "true" in the ingress-nginx-controller ConfigMap so that the Ingress Controller recognizes the X-Forwarded-For or X-Real-IP header:

```yaml
apiVersion: v1
data:
  allow-snippet-annotations: "false"
  compute-full-forwarded-for: "true"
  use-forwarded-headers: "true"
  enable-real-ip: "true"
  forwarded-for-header: "X-Real-IP" # X-Real-IP or X-Forwarded-For
kind: ConfigMap
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.10.1
  name: ingress-nginx-controller
  namespace: ingress-nginx
```
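On the receiving side, a backend (or the next proxy) has to decide which address in X-Forwarded-For to believe, because any client can send that header. A common approach, similar in spirit to nginx's realip module with real_ip_recursive on, is to walk the list from the right and skip the addresses of proxies you trust. The sketch below uses Python's standard ipaddress module; the function name is hypothetical and the trusted CIDRs are example values you would replace with your own proxy and Pod ranges.

```python
import ipaddress


def client_ip_from_xff(peer_ip: str, xff: str, trusted_cidrs: list[str]) -> str:
    """Return the first untrusted address, scanning from the nearest hop outward."""
    trusted = [ipaddress.ip_network(c) for c in trusted_cidrs]

    def is_trusted(ip: str) -> bool:
        addr = ipaddress.ip_address(ip)
        return any(addr in net for net in trusted)  # v4/v6 mismatch is simply False

    # The TCP peer is the closest hop; only believe the header if the peer is a known proxy.
    if not is_trusted(peer_ip):
        return peer_ip
    hops = [h.strip() for h in xff.split(",") if h.strip()]
    for hop in reversed(hops):
        if not is_trusted(hop):
            return hop
    # Every hop is trusted: fall back to the left-most (original) entry, if any.
    return hops[0] if hops else peer_ip


trusted = ["10.42.0.0/16", "172.16.0.0/24"]  # example: Pod CIDR and node/LB subnet
print(client_ip_from_xff("10.42.1.10", "198.51.100.23, 172.16.0.57", trusted))  # 198.51.100.23
# A spoofed left-most entry is ignored because a nearer untrusted hop is found first.
print(client_ip_from_xff("10.42.1.10", "1.2.3.4, 198.51.100.23", trusted))  # 198.51.100.23
# A request straight from an untrusted peer: the header is not believed at all.
print(client_ip_from_xff("198.51.100.23", "1.2.3.4", trusted))  # 198.51.100.23
```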

The main difference between the whoami NodePort Service and the ingress-nginx-controller Service is where their backends run: the NodePort backend is usually not deployed on every node, while the ingress-nginx-controller backend is usually deployed on every externally exposed node.

Setting externalTrafficPolicy: Local on the NodePort Service makes cross-node requests go unanswered. With the Ingress, the controller on the receiving node sees the real client IP, sets it in a header, and then proxies the request to a whoami endpoint anywhere in the cluster, so both source IP preservation and load balancing are achieved.
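The two hops can be sketched in a few lines of Python. This is an illustrative model only (round-robin backend choice, no real networking), assuming the controller runs as a DaemonSet so that every exposed node has one; apart from the controller IP 10.42.1.10 taken from the output above, the node names and Pod IPs are hypothetical.

```python
from itertools import cycle
from typing import Iterator, Optional


def via_ingress(client_ip: str, entry_node: str, controllers: dict[str, str],
                backends: Iterator[str]) -> Optional[dict[str, str]]:
    """Model one request: client -> ingress controller (Local policy) -> whoami Pod.

    `controllers` maps node name -> controller Pod IP on that node;
    `backends` yields whoami Pod IPs in turn.
    """
    controller_ip = controllers.get(entry_node)
    if controller_ip is None:
        return None  # Local policy and no controller Pod on this node: no response
    # Hop 1 stays on the node, so the controller sees the real client IP.
    # Hop 2 may go to a whoami Pod on any node, so load balancing is kept.
    return {
        "backend": next(backends),
        "RemoteAddr": controller_ip,  # the backend's TCP peer is the controller Pod
        "X-Real-IP": client_ip,       # header set by the controller on hop 1
    }


controllers = {"node1": "10.42.1.10", "node2": "10.42.2.10"}
pods = cycle(["10.42.1.1", "10.42.3.1"])
r1 = via_ingress("198.51.100.23", "node2", controllers, pods)
r2 = via_ingress("198.51.100.23", "node2", controllers, pods)
print(r1["backend"], r2["backend"], r1["X-Real-IP"])  # 10.42.1.1 10.42.3.1 198.51.100.23
```

Requests entering node2 still reach Pods on other nodes, and the client IP travels in the header rather than in the IP source field.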

Summary

- Network Address Translation (NAT), proxies, reverse proxies, and load balancers can all cause the source IP to be lost.

- To prevent this, the proxy server can put the real IP in the X-Real-IP HTTP header when it forwards the request, so the backend can read it. With multiple layers of proxies, use the X-Forwarded-For field instead, which lists the client IP followed by the IPs of the proxies the request passed through.

- Setting externalTrafficPolicy: Local on a NodePort Service preserves the source IP but loses cluster-wide load balancing.

- If ingress-nginx-controller is deployed as a DaemonSet on all nodes with the load balancer role, setting externalTrafficPolicy: Local on its Service preserves the source IP while keeping load balancing.